I am thus far primarily a Mac developer, though I have dipped my toes in the iOS development arena many times in the — sheesh! — 5 years since iOS 2.0 shipped with its developer-facing SDK.

My first and only shipping app for iOS is Shush, a static noise generator that was inspired by my son Henry’s birth. He was born in August 2008, months after iOS had been opened to the public. As you might imagine, I didn’t have a lot of spare time to play around with iOS programming, but I did have a screaming baby. For those of you who don’t know, static noise is famously soothing to small babies. Shush 1.0 was my bare-bones solution for dispensing infinite, soothing static noise from the magical device I could hold in my hands:

Pretty hot, huh? It did the trick. I would hold crying, months-old Henry against my chest and, with the iPhone quietly shushing in my hand, he would drift off to sleep.

I mostly forgot about Shush after Henry got old enough to no longer benefit from it. Fast-forward 3 years and my second son, Matthew, was about to be born. I realized I was going to need to dust off the old soothing machine, and it seemed like a great excuse to finally brush up the UI a little.

There isn’t much reason to look at the screen while using Shush: its primary purpose is generating audio noise. But despite Shush 1.0’s extremely minimalist design, I had always imagined the app was a prime candidate for a skeuomorphic design. In the old days of analog television, a common technique for generating this sound was simply to tune to an unused station. While the audio of the room filled with static white noise, the screen similarly rumbled with visual black-and-white “snow.” I thought it would be pretty cool to simulate this on the iPhone, as a throwback to those nostalgic days and so that a Shush user would have something vaguely interesting to gaze at if they chose to.

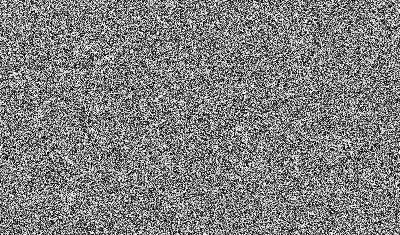

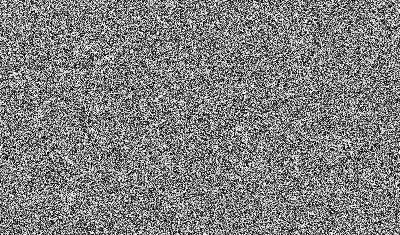

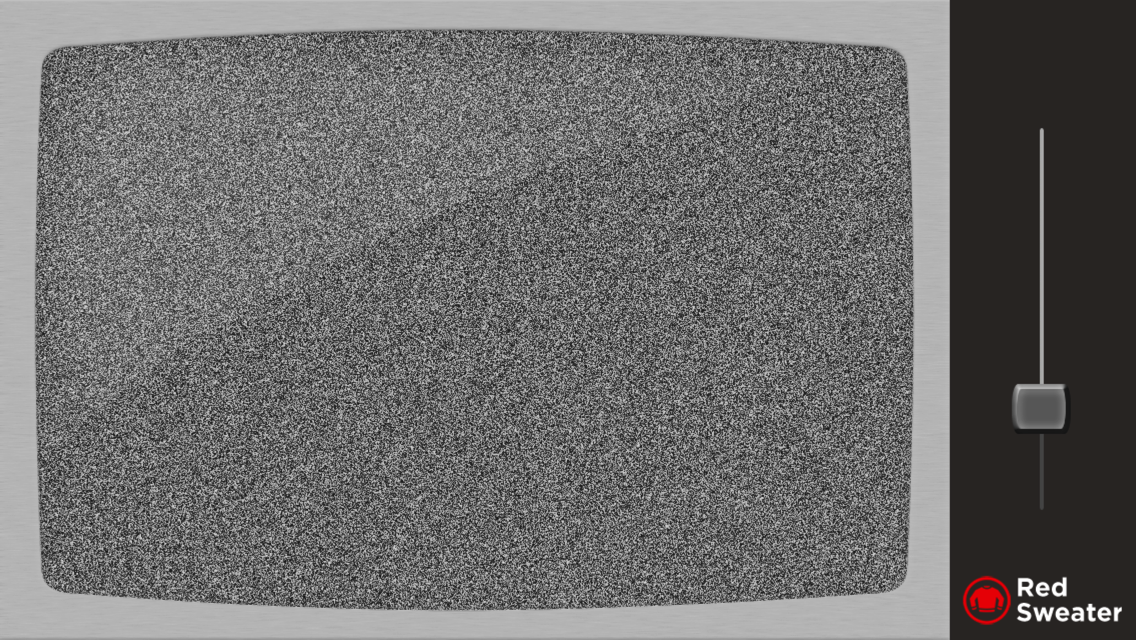

It turns out that simulating the static television snow of my analog youth is extremely challenging to do, even on a fancy iPhone. Generating audio white noise is relatively easy: you can get close to the desired output by simply taking random numbers and feeding them to the audio system as samples. It seems reasonable to assume you could do the same for video. For each frame, you could simply generate a random gray value between 0.0 and 1.0 for each pixel, rendering the result to an image:
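As a rough illustration of that naive idea, here is a minimal sketch in plain C. It is not the code from Shush: the function name `fill_snow_frame`, the 8-bit grayscale buffer layout, and the use of `rand()` as the random source are all assumptions made for the example.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Fill a width * height, 8-bit grayscale buffer with one random gray per
   pixel. rand() is a portable stand-in for whatever generator the real app
   used; the buffer layout is assumed for this sketch. */
void fill_snow_frame(uint8_t *pixels, size_t width, size_t height)
{
    size_t count = width * height;
    for (size_t i = 0; i < count; i++) {
        /* A gray level between 0.0 and 1.0, scaled to the 0..255 range. */
        double gray = (double)rand() / (double)RAND_MAX;
        pixels[i] = (uint8_t)(gray * 255.0 + 0.5);
    }
}
```

Every frame costs one random number per pixel, plus whatever it takes to turn the buffer into an image and draw it, which is where the time goes.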

This yields a pretty TV-snow-like image:

The problem is that it’s incredibly CPU intensive to calculate that many random numbers and construct an image. Even testing it again today on my relatively speedy iPhone 5, the naive approach produces an animation whose frames change only every 3 seconds or so. Clearly, this would not do.

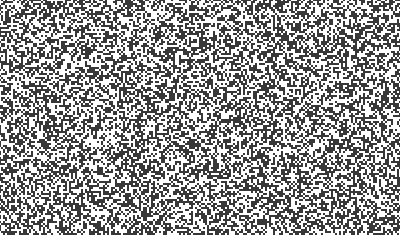

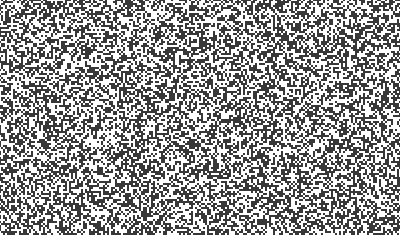

I experimented with a variety of approaches to speed up the rendering. What if I didn’t generate a wholly random number, but just alternated between 0 and 1? Also, did I really need to generate a random value for every pixel? What if I clumped the pixels together to cover more ground? I tried several such techniques to speed up the view drawing:
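One way to picture these shortcuts is the sketch below, again in plain C and again not the original code. It assumes the same 8-bit grayscale buffer as before, picks pure black or pure white instead of a full gray range, and paints each choice over a square clump of pixels. The name `fill_blocky_frame` and the `block` parameter are hypothetical.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Fill an 8-bit grayscale buffer with block x block squares that are each
   either black (0) or white (255): one coin flip per clump instead of one
   random gray per pixel. `block` is a hypothetical tuning parameter. */
void fill_blocky_frame(uint8_t *pixels, size_t width, size_t height, size_t block)
{
    if (block == 0) {
        block = 1; /* avoid an endless loop on a zero block size */
    }
    for (size_t by = 0; by < height; by += block) {
        for (size_t bx = 0; bx < width; bx += block) {
            uint8_t value = (rand() > RAND_MAX / 2) ? 255 : 0;
            /* Paint the whole clump, clipping at the right and bottom edges. */
            for (size_t y = by; y < by + block && y < height; y++) {
                for (size_t x = bx; x < bx + block && x < width; x++) {
                    pixels[y * width + x] = value;
                }
            }
        }
    }
}
```

With a block size of 4, the number of random values drops by a factor of 16, but the visible squares are exactly what makes the picture look chunky.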

The result was faster, but still not fast enough. What’s worse? It looked more like an homage to a Commodore 64 than to a vintage analog television set:

I was about ready to throw in the towel. Maybe this was simply not possible on an iPhone. I did some research on the web and it was not promising: not only is this a hard problem on an iPhone, it’s a hard problem everywhere. I learned that I’m not the first person who tried to generate an approximation of visual static on a digital computer, and that most people eventually resort to using a canned video animation of static. Modern television sets typically do this to give you the old-timey sense of “nothing is plugged in,” but if you look closely you can see repeating patterns in the static. In other words, it’s not really random. It’s not really static. If I couldn’t make this strange, skeuomorphic homage look more or less like real TV static, I was not interested in the challenge.

Up to this point all of my efforts had been at the level of the CPU: how can I fill this image buffer with random pixels faster? Having no experience with OpenGL or directly programming a GPU, I hadn’t even considered the possibility of approaching that. But a conversation with my friend Mike Ash put the idea in my head, and I ran with it. Since iOS devices are famously optimized for leveraging the GPU, I figured it might be a simple matter of asking the GPU to generate the random pixels for each frame on the fly, obviating the need for the generation of any CPU-bound image data.

I gave myself a crash-course education in OpenGL, learning the bare minimum I needed to know before tackling the problem. To give you an idea of where I was starting, I had heard the term “shader” before, but honestly had no idea what it did. I eventually learned that probably what I needed was a bit of OpenGL magic called a “pixel shader” (OpenGL’s own name for it is a “fragment shader”). Essentially it’s a chunk of code that runs on the GPU and gets to choose what color each pixel in a given scene should be. For my scenario, I would be setting up OpenGL with a flat surface pointed directly at the camera, so as to appear 2D. The shader’s job would be to fill that 2D surface up with random gray pixels.

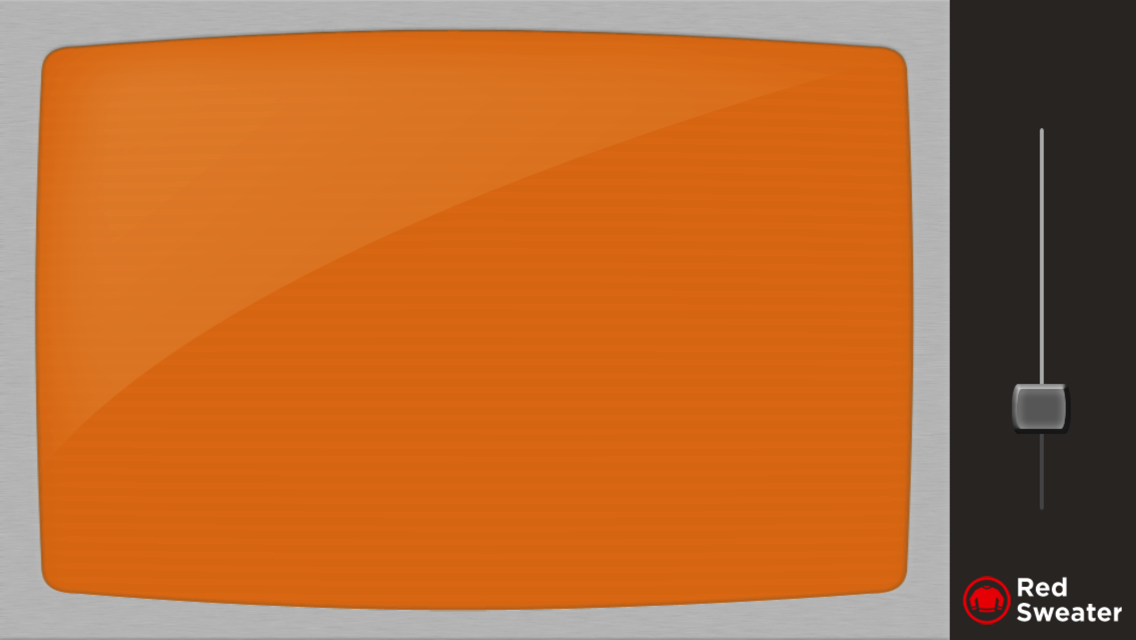

Using Apple’s GLKViewController, I was able to skip over much of the hardcore OpenGL setup, and skip right to the work on the shaders. I used some boilerplate code to get my GLKViewController wired to my pixel shader, and was able, for example, to fill the surface with a specific color:

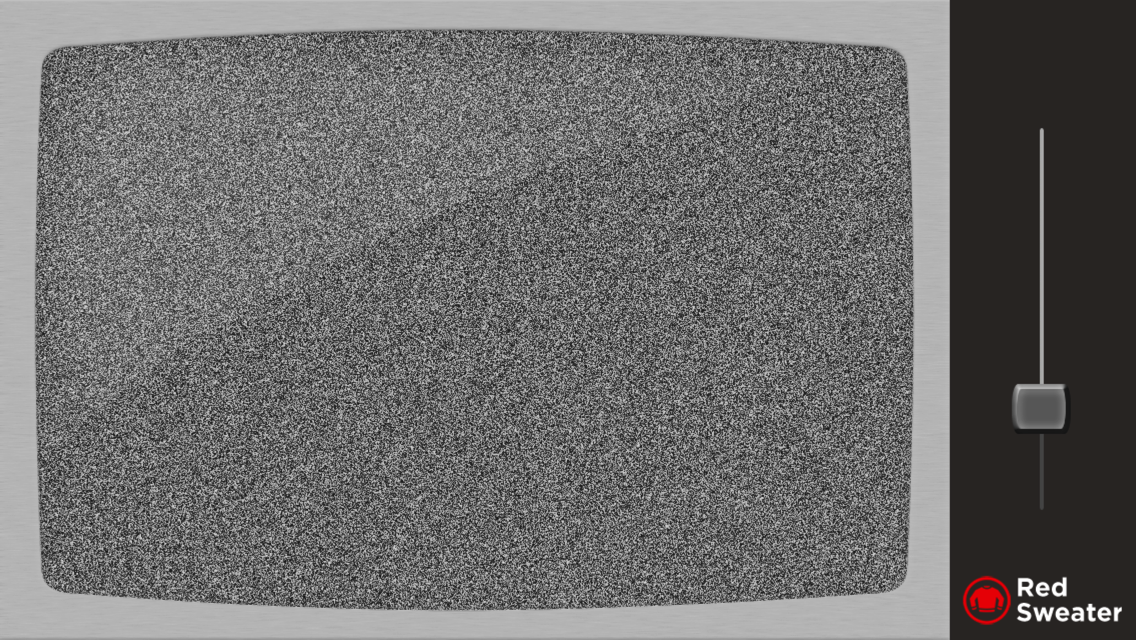

It works! And while I was working out how to make OpenGL do my bidding, I put some work into the TV frame appearance for the app:

At this point, it feels like I’m almost home. I just need to swap out the constant RGB values for ones that insert random values of gray. What’s that you say? There’s no random generator in OpenGL? Well, I’ll be damned.

Once again things appeared hopeless. I played around with the addition of a “vertex shader,” which is a shader that has access to additional information about the scene. Using the fact that a vertex shader and pixel shader can communicate with each other, I was able to incorporate the specific coordinate for the pixel being shaded. Scouring the web, I found example code for OpenGL that would take a varying number like this and “fuzz” it sufficiently that it appeared to be somewhat random. Thus, my next effort involved taking the x and y coordinates for the current pixel and transforming them into a seemingly random shade of gray:

Oh my god! It’s beautiful. We’re done, this is exactly what I’ve been striving to do for weeks now. Except… it doesn’t animate. It’s just a rendered scene of random gray pixels in which the random gray pixels are always exactly the same as before. Why? Because the inputs to the pseudo-random fuzz function are always the same: the coordinates of each pixel in the scene.

My final stroke of insight was to inject “just enough randomness” into the scene by hooking up a value that the pixel shader obtains from the client app. If I can supply random numbers to the shader, you may ask, what’s the big deal? Why not just supply all the random numbers? Because the facility for injecting values into the shader only gives the client app access once per complete rendering. Once it starts rendering, the determination of values for each of the pixels in the scene is completely up to the shader itself. But by combining the pseudo-random generation based on pixel coordinate with a random value injected once per rendering, the results are as in the image above, but beautifully, quickly rendered (video capture doesn’t do it full justice).

Here is a final code snippet of both the vertex and fragment shaders. You can pop them into a project in Apple’s OpenGL Shader Builder to get a better feel for how they work.

On January 17, 2012, I released Shush 2.0. The next day, Matthew was born. It worked great for the few months that I needed it, and just as I did before, I have since mostly stopped using the app myself. However, it was a great exercise in pushing the limits of what the iPhone seemed capable of doing. Hopefully this experience will inspire you to look deeper for solutions to the problems that vex you while working with these fascinating, limited devices.