I recently came across a perplexing crash in my unit tests that led me down a path of discovery, culminating in an awareness that “I was holding it wrong” when it comes to using NSLog in Swift.

Here is the source code to an extension on FileManager intended to make it easy to read the last modification date of a file:

    @objc(rsLastModificationDateAtURL:)
    func lastModifedDate(at url: URL) -> Date? {
        do {
            let resourceValues = try url.resourceValues(forKeys: [.contentModificationDateKey])
            return resourceValues.contentModificationDate
        } catch {
            NSLog("Failed to get modification date at URL: \(url) - \(error)")
            return nil
        }
    }

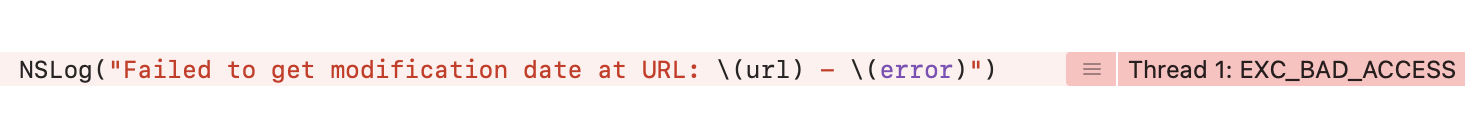

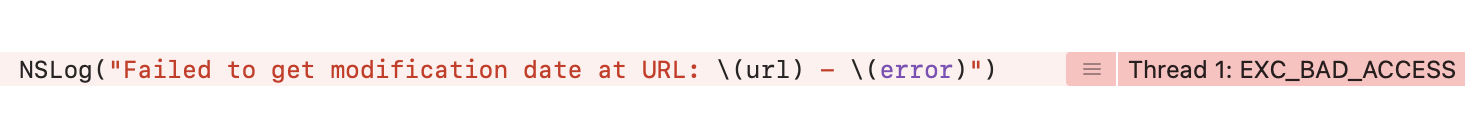

The line of interest is the invocation of NSLog in the catch block, which is reached when a modification date could not be read because an error was thrown. For example, if the file were not found this line of code would be reached. Looks innocent enough, right? Here’s what I came up against while running the unit tests for one of my apps:

At first I thought that either the url or the error variable must be bad. After all, Swift string interpolation and NSLog are both battle-tested. Surely if there were a way to easily crash by logging good variables, it would have been fixed a long time ago. But no, both of the variables in this instance were perfectly valid references. So what gives?

The Problem

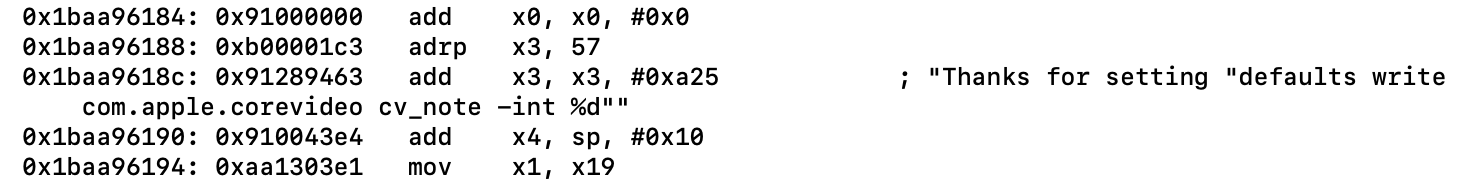

The problem is rooted in the fact that NSLog from Swift calls through to the underlying C-based interface, which supports printf-style format strings and variadic arguments. This is why, for example, you can invoke NSLog(@"Hi %@", name) in Objective-C and, if name represents the string “Daniel”, you get “Hi Daniel” printed to the console.

In the scenario of my crash, the interpolation of “url” and “error” results in surprise template placeholders, because of the presence of spaces in the path that the URL represents. In my tests, a file called “Open Program Ideas File” exists and is used to test some file manipulations. When the path to this file is encoded as a URL, the name of the file becomes “Open%20Program%20Ideas%20File”. Since the error’s failure message reiterates the name of the file it couldn’t find, we end up with another copy of the percent-escaped string in the parameter to NSLog. Each instance of “%20” followed by a letter is liable to be interpreted by NSLog as a printf-style format specifier. So as far as our filename’s string is concerned, we have “%20P”, “%20I”, and “%20F” threatening to cause trouble. Capital letters in printf formats are pretty uncommon, but the %F format specifier is documented as:

64-bit floating-point number (double), printed in decimal notation

In short, we have a situation where logging the URL and the error inadvertently asks NSLog to look for a 64-bit floating-point number in the arguments list, with the “20” read as a minimum field width. No such argument was ever passed. So whether the unit test, or app, crashes in a situation like this depends entirely on what data happen to be in the places where the variadic arguments would be, and whether that data causes a crash when interpreted as the type the format specifier calls for.
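To make the surprise placeholders visible, here is a small sketch that scans a message for sequences that look like printf-style specifiers. The helper name suspectFormatSpecifiers(in:) and the /tmp path are assumptions made up for illustration, and the pattern is deliberately crude rather than a full printf grammar.

```swift
import Foundation

/// Hypothetical helper: returns substrings that look like printf-style
/// placeholders (a percent sign, optional digits, then a letter or "@").
func suspectFormatSpecifiers(in message: String) -> [String] {
    let pattern = "%[0-9]*[A-Za-z@]"
    guard let regex = try? NSRegularExpression(pattern: pattern) else { return [] }
    let fullRange = NSRange(message.startIndex..., in: message)
    return regex.matches(in: message, range: fullRange).compactMap { match in
        // Convert each NSRange back into a Swift substring.
        Range(match.range, in: message).map { String(message[$0]) }
    }
}

// Illustrative path only; the file does not need to exist.
let url = URL(fileURLWithPath: "/tmp/Open Program Ideas File")

// The same kind of string the crashing NSLog call receives as its format.
let message = "Failed to get modification date at URL: \(url.absoluteString)"
print(suspectFormatSpecifiers(in: message))
```

Each space in the file name becomes “%20”, so every word after the first contributes one suspect sequence.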

How to Fix It

So how do we fix it? Well, we need to make sure that the string that ultimately gets passed to NSLog doesn’t contain these surprise placeholder values. If this were Objective-C, we wouldn’t run into the problem because the parameters would need to be passed as variadic arguments:

NSLog(@"Failed to get modification date: %@ - %@", url, error);

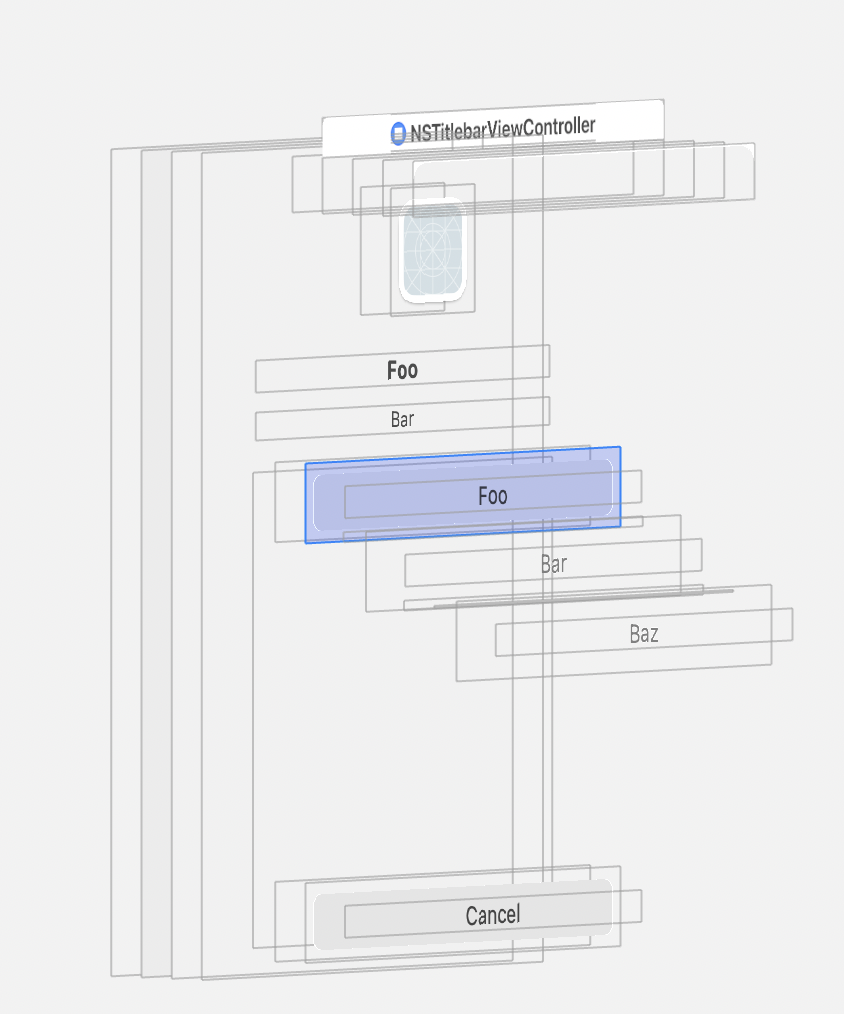

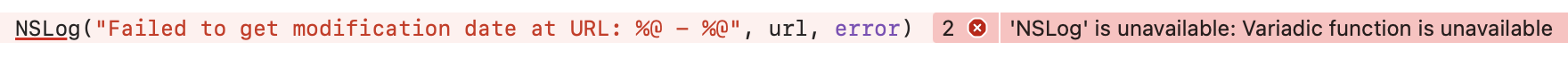

But if we try that in Swift, we run into trouble. Passing url and error directly is rejected with an error, which made it look to me as though NSLog in Swift doesn’t support variadic arguments.

I’m pretty sure this is how I ended up in this situation in the first place: I probably tried to adapt my old Objective-C code to Swift, ran into this error, and obediently changed to using inline variable substitution instead. It just seemed like “the Swift thing to do.” But if we can’t use NSLog with inline substitution, and we can’t use variadic arguments, what are we supposed to do?

Update: Thanks to Sebastian Celis for pointing out on Twitter that variadic arguments do work with NSLog in Swift. I think the failure above happens specifically because I’m passing non-NSObjects (Swift’s URL and Error values) to NSLog, which isn’t supported.
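Building on that update, here is a minimal sketch of two ways to keep the variables out of the format string. It assumes an Apple platform, where URL and Error bridge to NSURL and NSError with “as”. The function name logFailureWithNSLog is hypothetical and not part of the original extension.

```swift
import Foundation

/// Hypothetical helper: logs the failure without letting the contents of
/// `url` or `error` become part of NSLog's format string.
func logFailureWithNSLog(url: URL, error: Error) {
    // Option 1: a constant format string, with bridged objects passed as
    // variadic arguments to fill the two %@ placeholders.
    NSLog("Failed to get modification date at URL: %@ - %@", url as NSURL, error as NSError)

    // Option 2: keep the interpolation, but pass the finished message as
    // the single argument to a "%@" format so it is never parsed itself.
    let message = "Failed to get modification date at URL: \(url) - \(error)"
    NSLog("%@", message)
}
```

In both cases any “%20F” in the path is treated as plain text, because the only string NSLog parses for placeholders is a literal written in the source.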

A few years ago Apple introduced a new logging framework called the Unified Logging System, which supports logging with variadic arguments from both Swift and Objective-C. It also supports a host of other features that allow you to categorize and prioritize your log messages and, well, it sounds great, but it’s also quite a bit more complicated than just invoking NSLog. It’s complicated enough that I can’t offer a one-line fix for the situation I’ve described here, but I encourage you to investigate your options.
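As a starting point for that investigation, here is a rough sketch of the same log call using os_log with variadic arguments. It is not a drop-in replacement for NSLog: it assumes an Apple platform, uses the default log object rather than a custom subsystem and category, and marks both values as public, which may not suit your privacy requirements. The function name logFailureWithOSLog is hypothetical.

```swift
import Foundation
import os.log

/// Hypothetical helper: reports the failure through unified logging.
func logFailureWithOSLog(url: URL, error: Error) {
    // The message must be a static string, so interpolated values cannot
    // end up inside it. %{public}@ opts each object out of redaction.
    os_log("Failed to get modification date at URL: %{public}@ - %{public}@",
           log: .default,
           type: .error,
           url as NSURL,
           error as NSError)
}
```

Because the message parameter here is a StaticString, writing "\(url)" inside it does not compile, which rules out the accidental-placeholder problem at build time.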

Audit Your Code

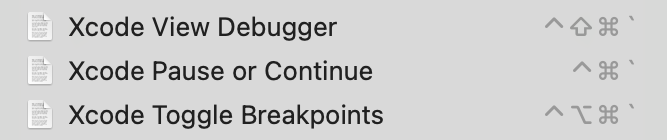

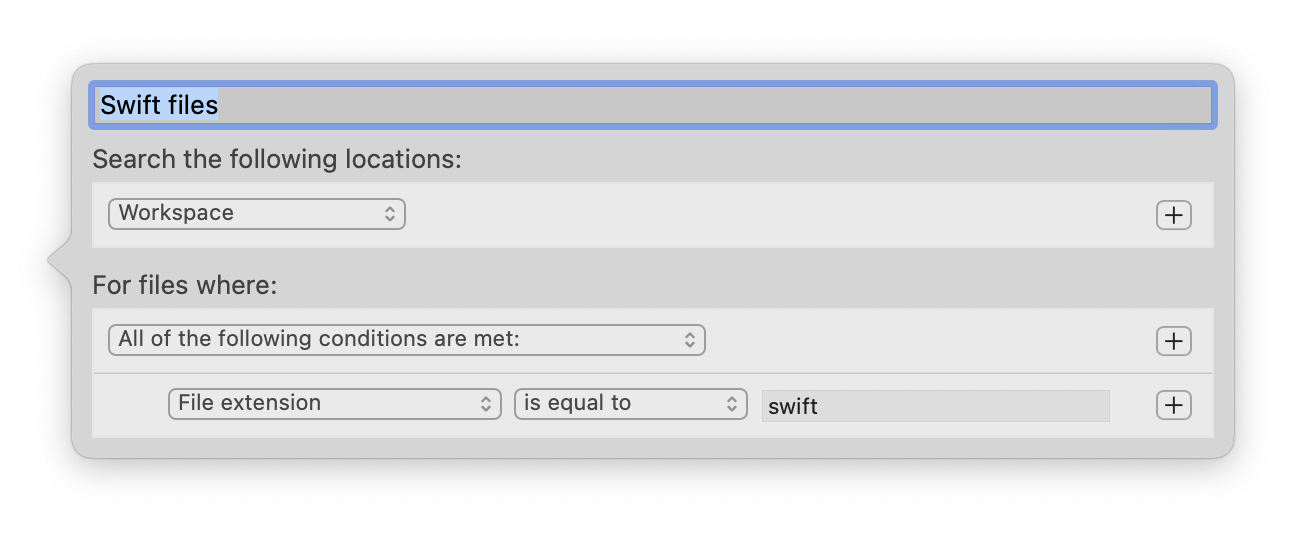

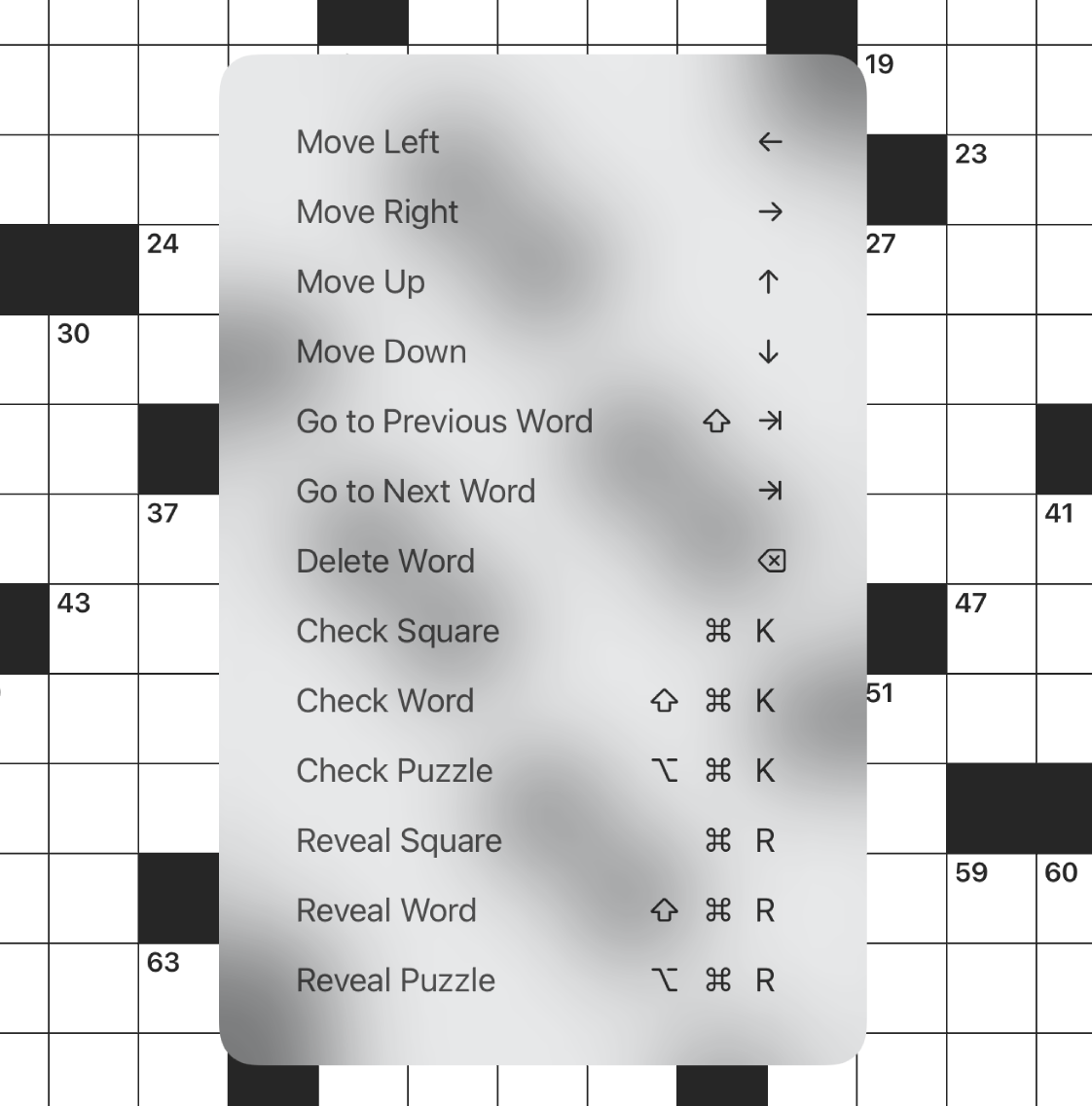

I also encourage you to audit your own code, and I’ll describe a method for searching your own code base to see if you might be vulnerable to the kind of unpredictable crash that inline interpolation can cause with NSLog. In Xcode, create a custom search scope that allows you to easily search just the Swift files in your code base, then search for instances of the problematic pattern. Obviously if you’ve got a 100%-Swift code base, creating the custom search scope is not necessary:

- Show the Find Navigator by selecting View -> Navigator -> Find from the menu bar.

- Click the “In Project” (by default) search scope label beneath the search field. This reveals the list of search scopes currently configured in your workspace.

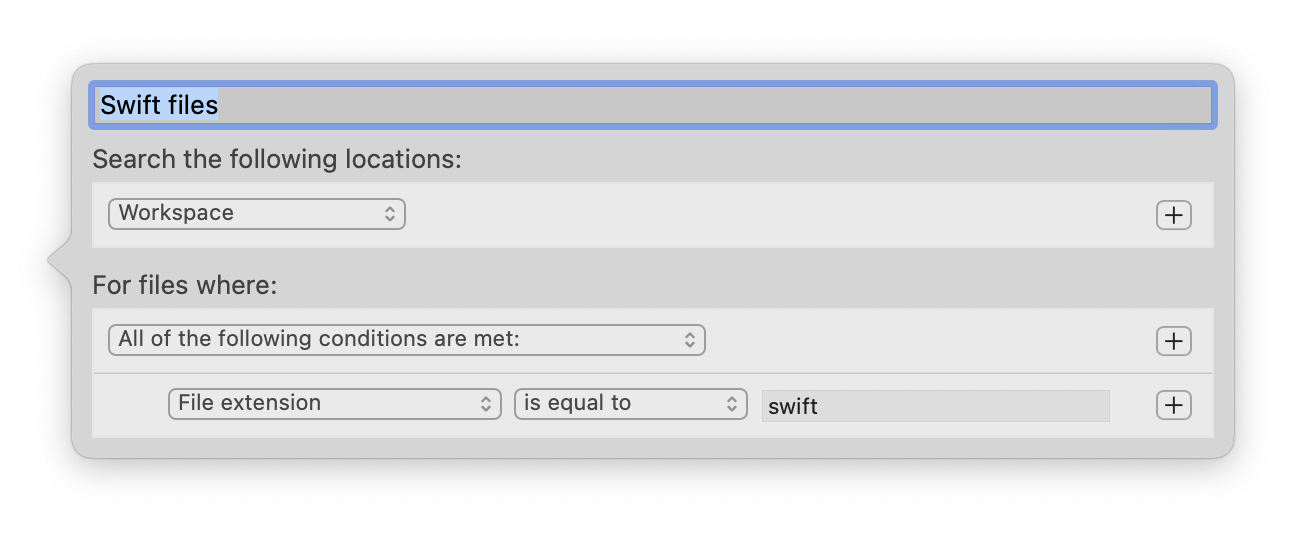

- Click the “New Scope” button and configure a scope designed to match all files with the “swift” file extension:

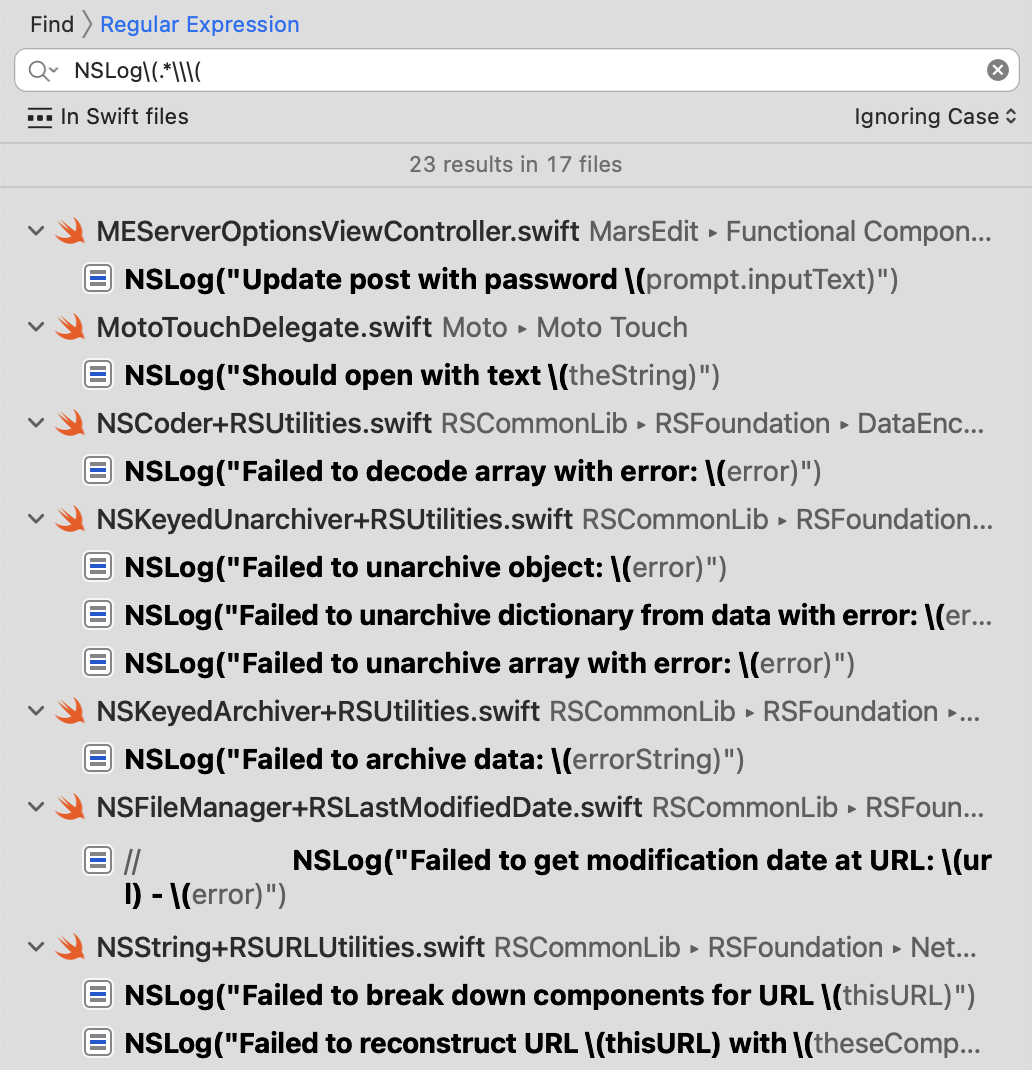

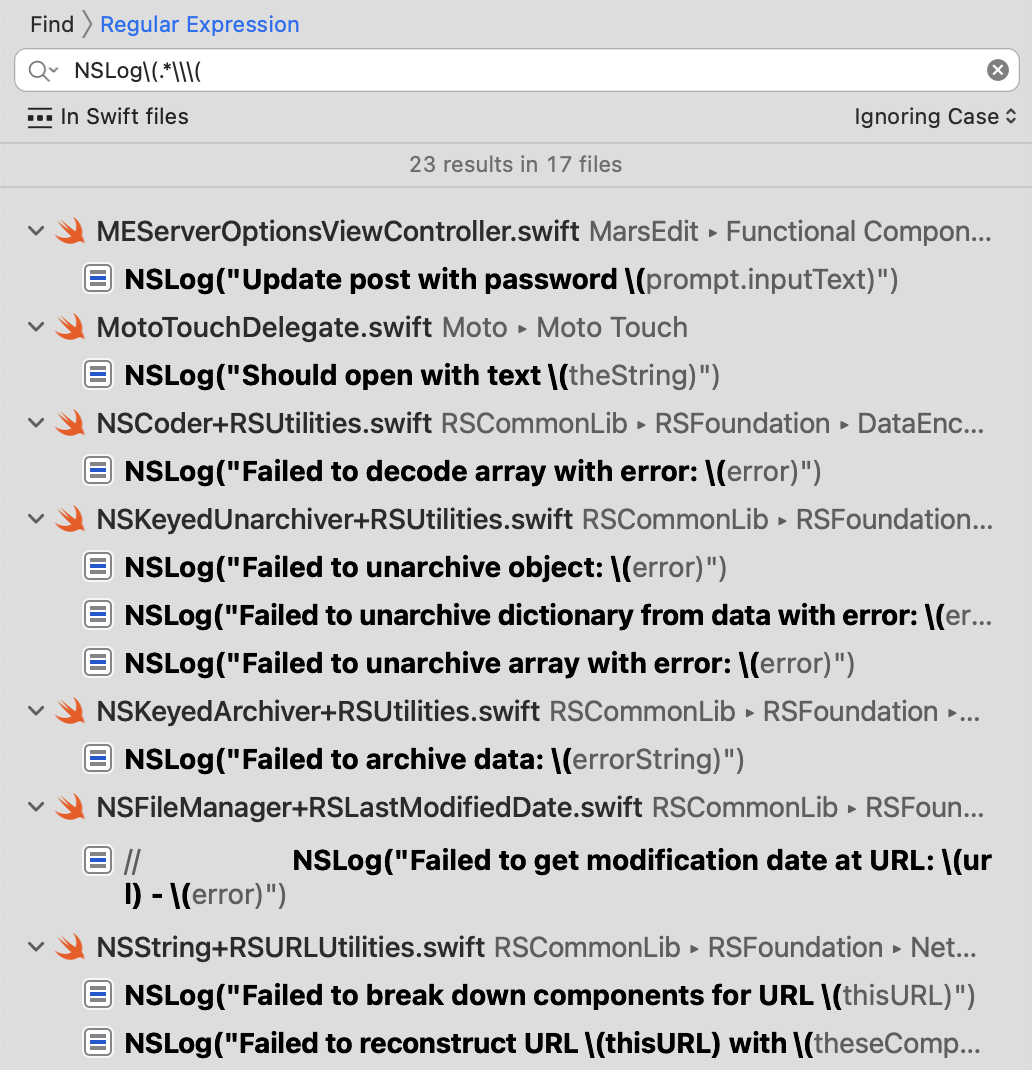

- Click the Find type (“Text” by default) in the search parameters above the search field, and change it to “Regular Expression”.

- Type or paste in the search string NSLog\(.*\\\( in the search field. This is a regular expression that will find instances of the string “NSLog(” followed by any number of characters that includes the substring \(, the tell-tale sign of Swift string interpolation.
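If you would rather run the audit outside Xcode, the same regular expression can be applied from a short script. This is a minimal sketch: the function name linesWithInterpolatedNSLog(in:) and the sample source are made up for illustration, and like the Xcode search it only catches calls where “NSLog(” and the interpolation sit on the same line.

```swift
import Foundation

/// Hypothetical helper: returns the lines of `source` that contain
/// "NSLog(" followed later on the same line by "\(".
func linesWithInterpolatedNSLog(in source: String) -> [String] {
    // Same pattern as the Xcode search, written as a raw string literal
    // so the backslashes reach the regex engine unchanged.
    let pattern = #"NSLog\(.*\\\("#
    guard let regex = try? NSRegularExpression(pattern: pattern) else { return [] }
    return source.components(separatedBy: .newlines).filter { line in
        let range = NSRange(line.startIndex..., in: line)
        return regex.firstMatch(in: line, range: range) != nil
    }
}

// Made-up sample source: only the first line should be flagged.
let sample = #"""
NSLog("Failed to get modification date at URL: \(url) - \(error)")
NSLog("Failed to get modification date at URL: %@", url as NSURL)
print("Unrelated \(value)")
"""#

let flagged = linesWithInterpolatedNSLog(in: sample)
print(flagged)
```

To scan real files, you could read each Swift file's contents into a string and pass it to the same function.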

In my own code base, this audit results in an alarming 23 instances in 17 files, all of which I will soon be converting to a safer logging method. I encourage you to do the same in your code base. Hopefully you’ll have been following a safer pattern all along and won’t have any work to do, but if you’re anything like me, you might find you’re more vulnerable to the problem than you thought!