I am the kind of developer who leans heavily on automated systems for the improvement of my code. For this reason I tend to enable the maximum number of warnings, rely upon static analysis to discover nuanced bugs, develop and run unit tests to automatically confirm expected behaviors, and I encourage my apps to crash hard if they are going to crash at all.

I would characterize all of these behaviors as part of an overall attitude of vigilance against software defects. Learning about problems in code quickly and clearly is a key step in maintaining an overall high level of code quality.

Most of my software is developed in Objective-C, which enables me to leverage compile-time and run-time tools from Apple that catch my errors quickly. Between compile-time warnings and errors, and runtime exceptions and crashes, the vast majority of my programming errors in Objective-C are caught within minutes of writing them.

On the other hand, MarsEdit features a rich HTML text editor largely implemented in JavaScript, a language which tends not to afford any of these convenient alerts to my mistakes. I have often stared in bewilderment at the editor window’s perplexing behavior, only to discover, after many lost minutes or hours, that some subtle JavaScript error has been preventing the expected behavior of my code. I’ll switch to the WebKit inspector for the affected WebView, and discover the console is littered with red: errors being dutifully logged by the JavaScript runtime, but ignored by everybody.

Wouldn’t it be great if the JavaScript code crashed as hard as the Objective-C code does?

In fact, these days mine does. By a combination of JavaScript hooks that are alerted to the errors, Objective-C bridges that propagate them to the host app, and the judicious use of undocumented WebKit Inspector methods, development versions of my WebView-based apps now automatically present an inspector window the moment any error occurs in the view’s underlying JavaScript code.

For those of you who also develop JavaScript-heavy WebKit apps, I’ll share the steps for enabling this same type of behavior in your app. These steps all apply to Mac-based WebViews, but most of the same techniques could be used with iOS if you are willing to use some private methods to establish a bridge between the WebView and host app (for debug builds only, of course).

Step 1: Catch the error in JavaScript.

In whatever the main source content is for your WebView-based user interface, add some script code to register a callback for JavaScript errors:

<script type="text/javascript">
function gotJavaScriptError(errorEvent)
{
    console.log("Error: " + errorEvent.message);
}
window.addEventListener("error", gotJavaScriptError, false);
</script>

Now if you encounter some error and happen to be attached with the web inspector, you’ll see additional confirmation that the error has in fact been noticed. If you’re following along in your own code while you read, I strongly encourage you to add a testing mechanism for generating errors. A simple “click” event callback (or “touchstart” on iOS) does the trick well:

<script type="text/javascript">
function gotClick(theEvent)
{
    // Deliberately undefined identifier, so this line raises an error
    blargh
}
window.addEventListener("click", gotClick, false);
</script>

Now when you click, you blargh. And when you blargh, the error is logged. Of course, just logging the error isn’t very useful because it’s quiet and goes ignored. Just like JavaScript errors usually do…
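To see why a bare blargh is enough to trip the handler, here is a minimal sketch. The helper name provokeError is my own invention for illustration, not part of any API. Evaluating an undeclared identifier makes the runtime throw a ReferenceError. The sketch catches it only so the result can be inspected, whereas in the click callback above it goes uncaught and ends up at the “error” listener.

```javascript
// Hypothetical helper: evaluates an undeclared identifier and returns
// the error that the JavaScript runtime throws in response.
function provokeError() {
    try {
        blargh; // undeclared on purpose
    } catch (error) {
        return error;
    }
    return null;
}

var provoked = provokeError();
// The name is always "ReferenceError"; the wording of the message
// differs from one JavaScript engine to another.
console.log(provoked.name + ": " + provoked.message);
```

The exact message text is engine-specific, so I wouldn’t write any code that depends on it.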

Step 2: Propagate the error to the host app.

You could do some interesting stuff in the WebView itself. For example you might convert the error into a string and display a prominent alert message. But since there’s so much more you can do once the host app is in charge, it’s worth going the extra mile for that functionality.
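As a rough sketch of that in-WebView option, something like the following would convert the error event to a string and show it with alert(). The function names describeErrorEvent and alertJavaScriptError are hypothetical, and I’m assuming the event carries the standard message, filename and lineno properties, some of which may be empty. In a WebView, alert() probably only shows something if the host app’s UI delegate presents JavaScript alert panels.

```javascript
// Hypothetical helper: turn an error event into a one-line description.
// message, filename and lineno are standard ErrorEvent properties, but
// filename and lineno may be empty, so they are only added if present.
function describeErrorEvent(errorEvent) {
    var description = "JavaScript error: " + errorEvent.message;
    if (errorEvent.filename) {
        description += " (" + errorEvent.filename;
        if (errorEvent.lineno) {
            description += ":" + errorEvent.lineno;
        }
        description += ")";
    }
    return description;
}

// Hypothetical handler: show the description in an alert. The guard lets
// the sketch load outside a browser or WebView, where window is missing.
function alertJavaScriptError(errorEvent) {
    var description = describeErrorEvent(errorEvent);
    if (typeof window !== "undefined" && typeof window.alert === "function") {
        window.alert(description);
    }
    return description;
}

if (typeof window !== "undefined") {
    window.addEventListener("error", alertJavaScriptError, false);
}
```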

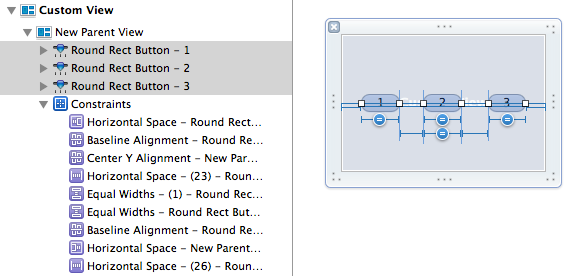

To tie the host app to the WebView we need to wait for the WebView’s frame to finish preparing its “window script object,” and then set a named attribute on that object that refers to an Objective-C instance. See that your WebView has a frameLoadDelegate configured, and within that delegate object implement this method:

- (void)webView:(WebView *)webView
    didClearWindowObject:(WebScriptObject *)windowObject
    forFrame:(WebFrame *)frame
{
    // Expose ourselves as a JavaScript window attribute
    [[webView windowScriptObject] setValue:self forKey:@"appDelegate"];
}

At this point any JavaScript code, including our error-handling callback, can reference the Objective-C delegate directly. But in order to make good use of the delegate we’ll have to add a couple other methods. The first lets the WebKit runtime know that we are not fussy about which of our methods are accessible to the WebView:

+ (BOOL) isSelectorExcludedFromWebScript:(SEL)aSelector
{
    return NO;
}

Now JavaScript code within the WebView can call any method it chooses on the delegate object. Implement something suitable for catching the news of the JavaScript error:

- (void) javaScriptErrorOccurred:(WebScriptObject*)errorEvent
{
    NSLog(@"WARNING JavaScript error: %@", errorEvent);
}

And back in your WebView HTML source file, amend the JavaScript error handler to call through to Objective-C in addition to pointlessly logging to the JavaScript console:

<script type="text/javascript">
function gotJavaScriptError(errorEvent)
{
    console.log("Error: " + errorEvent.message);
    window.appDelegate.javaScriptErrorOccurred_(errorEvent);
}
...
</script>

Notice that the colons in Objective-C method names are simply replaced with underscores to make them compatible with the JavaScript function naming rules. The errorEvent argument in this case is translated to a DOMEvent object instance in Objective-C, where it can be further interrogated. Alternatively you could pull the parts of the event you want out in JavaScript, and pass along only those bits.
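Here is a minimal sketch of that alternative, together with the colon-to-underscore renaming. The helper names (scriptNameForSelector, summarizeErrorEvent, reportErrorToHost) are hypothetical, and I’m assuming the Objective-C method is happy to receive a plain string instead of the event object. The renaming helper only covers the colon rule described above, not selectors that themselves contain underscores or dollar signs.

```javascript
// The renaming rule described above: each colon in the Objective-C
// selector becomes an underscore in the JavaScript method name.
function scriptNameForSelector(selector) {
    return selector.replace(/:/g, "_");
}

// Pull out only the parts of the event we care about, as one string.
function summarizeErrorEvent(errorEvent) {
    return errorEvent.message + " at " +
        errorEvent.filename + ":" + errorEvent.lineno;
}

// Forward the summary to the host object, if one has been exposed.
// Returns false when there is no host, e.g. when the same page is
// loaded in an ordinary browser during testing.
function reportErrorToHost(host, errorEvent) {
    if (!host) {
        return false;
    }
    var methodName = scriptNameForSelector("javaScriptErrorOccurred:");
    host[methodName](summarizeErrorEvent(errorEvent));
    return true;
}

if (typeof window !== "undefined") {
    window.addEventListener("error", function (errorEvent) {
        reportErrorToHost(window.appDelegate, errorEvent);
    }, false);
}
```

Checking for the host object first means the page still behaves when window.appDelegate has not been set.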

Now we’ve done a considerable amount of work, only to upgrade our easy-to-ignore JavaScript errors from littering the web console to littering the system console. At least we’d notice these errors in the Xcode debugger console, and might eventually get around to taking a closer look. But we can still do much better.

Step 3: Get face to face with the error.

Of course from Objective-C there are any manner of ways you could handle the error. In a shipping app it might make sense to prompt the user with news of the issue and offer, much like a crash reporter would, to send information about the error to you. Or if the contents and behavior of your WebView are critical enough, maybe it’s worth forcing the app to quit just as it would for a native code crash. But for debugging builds I’ve found it very helpful simply to have the error force the Web Inspector open so I am no longer able to quietly ignore all the red console logging. In the delegate class, go back to the javaScriptErrorOccurred: method and replace the NSLog call with this string of fancy mumbo-jumbo:

- (void) javaScriptErrorOccurred:(id)errorEvent
{
#if DEBUG
    id inspector = [[self webView] performSelector:@selector(inspector)];
    [inspector performSelector:@selector(showConsole:) withObject:nil];
#endif
}

That’s it. Now when you run into a WebView-based JavaScript error, the web inspector appears and the list of pertinent errors is front-and-center.

I encourage you to leave the DEBUG barrier in place, because as the prolific use of “performSelector” may suggest, these are private WebKit methods that would probably not be viewed as acceptable by e.g. App Store reviewers. Anyway, you probably don’t want customers being pushed into the Web Inspector.

I hope this technique proves useful for those of you with extensive JavaScript source bases of your own. For everybody else, perhaps I’ve helped to drive home the idea that we should be as vigilant as possible against software defects. All developers write bugs, all the time. It’s only fair that we try to balance the scales and give ourselves a fighting chance by also being alerted to the bugs we write, all the time.